HCI

“Ads that Talk Back”: Implications and Perceptions of Injecting Personalized Advertising into LLM Chatbots

Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies

Brian Tang, Kaiwen Sun, Noah T. Curran, Florian Schaub, Kang G. Shin

Embedding personalized advertisements within chatbot responses. We developed a system that generates targeted ads in LLM chatbot conversations and conducted a user study to assess how ad injection impacts trust and response quality. Users struggle to detect chatbot ads, and undisclosed ads are rated more favorably, raising ethical concerns. Contribution: lead author.

Eye-Shield: Real-Time Protection of Mobile Device Screen Information from Shoulder Surfing

32nd USENIX Security Symposium (2023)

Brian Tang, Kang G. Shin

A novel defense system against shoulder surfing attacks on mobile devices. We designed Eye-Shield, a real-time software system that keeps on-screen content readable to the user while making it appear blurry to onlookers. Contribution: lead author.

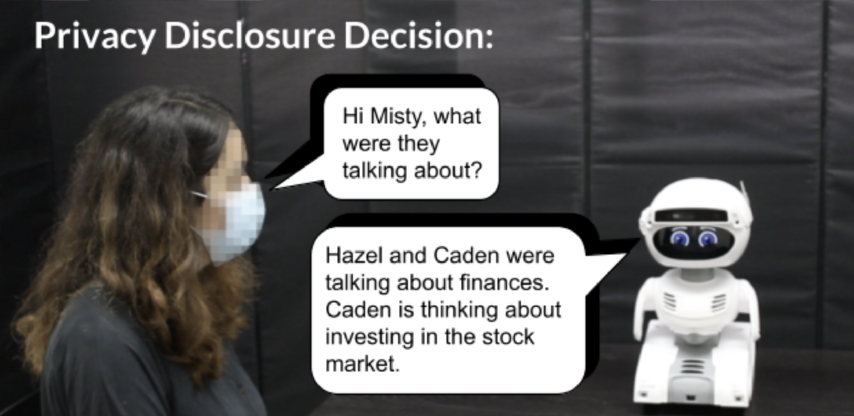

CONFIDANT: A Privacy Controller for Social Robots

17th ACM/IEEE International Conference on Human-Robot Interaction (2022)

Brian Tang, Dakota Sullivan, Bengisu Cagiltay, Varun Chandrasekaran, Kassem Fawaz, Bilge Mutlu

Exploring privacy management in conversational social robots. We developed CONFIDANT, a privacy controller that leverages various NLP models to analyze conversational metadata. We found that robots equipped with privacy controls are perceived as more trustworthy, privacy-aware, and socially aware. Contribution: lead author.
