Fairness Properties of Face Recognition and Obfuscation Systems

Sep 2, 22

Information

Authors

Harrison Rosenberg, Brian Tang, Kassem Fawaz, Somesh Jha

Conference

32nd USENIX Security Symposium (2023)

Blog

Intro

The proliferation of automated face recognition in commercial and government sectors has raised significant privacy concerns for individuals. A recent, popular approach to addressing these concerns is to employ evasion attacks against the metric embedding networks that power face recognition systems. Face obfuscation systems generate imperceptible perturbations that, when added to an image, cause the face recognition system to misidentify the user. The key to these approaches is to generate perturbations using a pre-trained metric embedding network and then apply them to an online system whose model might be proprietary. This dependence of face obfuscation on metric embedding networks, which are known to be unfair in the context of face recognition, raises the question of demographic fairness: are there demographic disparities in the performance of face obfuscation systems?

Embedding Space Structure

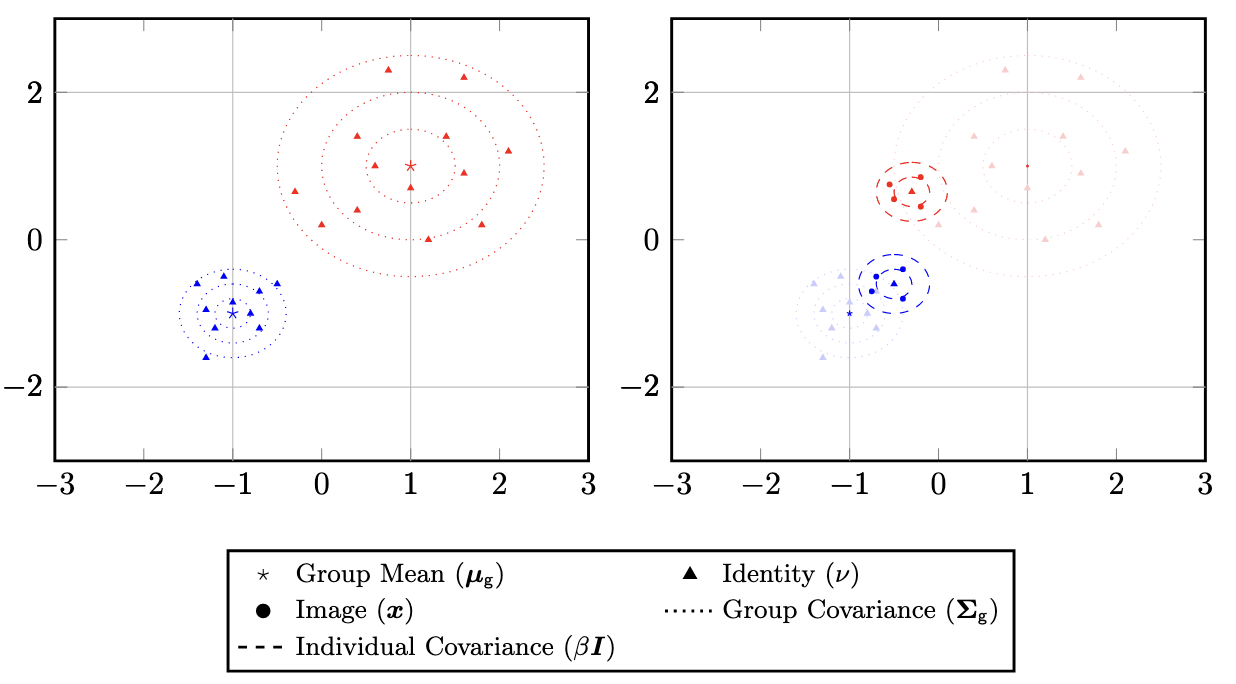

To address this question, we perform an analytical and empirical exploration of the performance of recent face obfuscation systems that rely on deep embedding networks. Intuitively, the embedding space consists of images belonging to identities (left) and identities belonging to larger demographic groups (right).

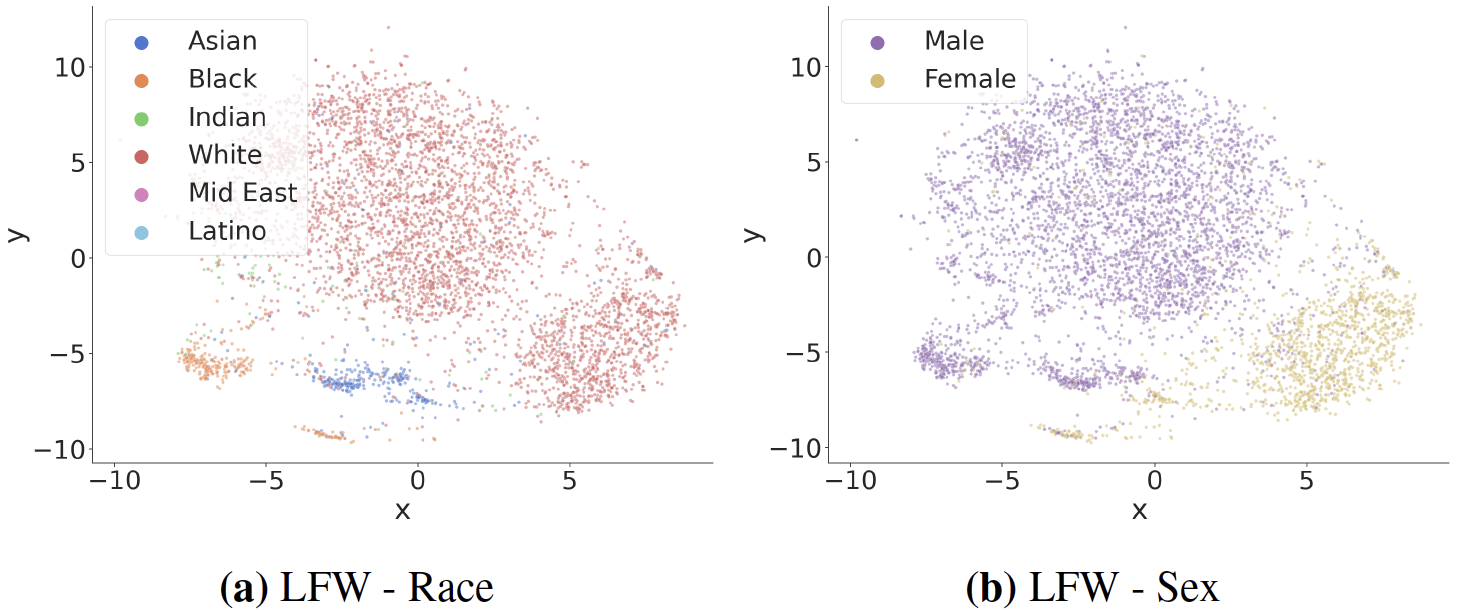

We find that metric embedding networks are demographically aware; they cluster faces in the embedding space based on their demographic attributes. Below is a visual representation of the embedding space using a dimensionality reduction technique known as t-SNE [1].

Evaluation

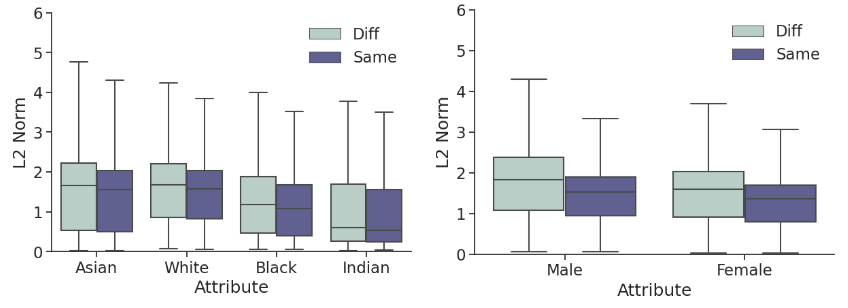

Adversarially perturbing these identities may require different robustness budgets [2] depending on the individual’s demographic and the neighboring identities. We observe this in the disparities between L2 perturbation costs for the Carlini-Wagner attack [3]. Thus, this effect carries through to face obfuscation systems: faces belonging to minority groups incur reduced utility compared to those from majority groups.

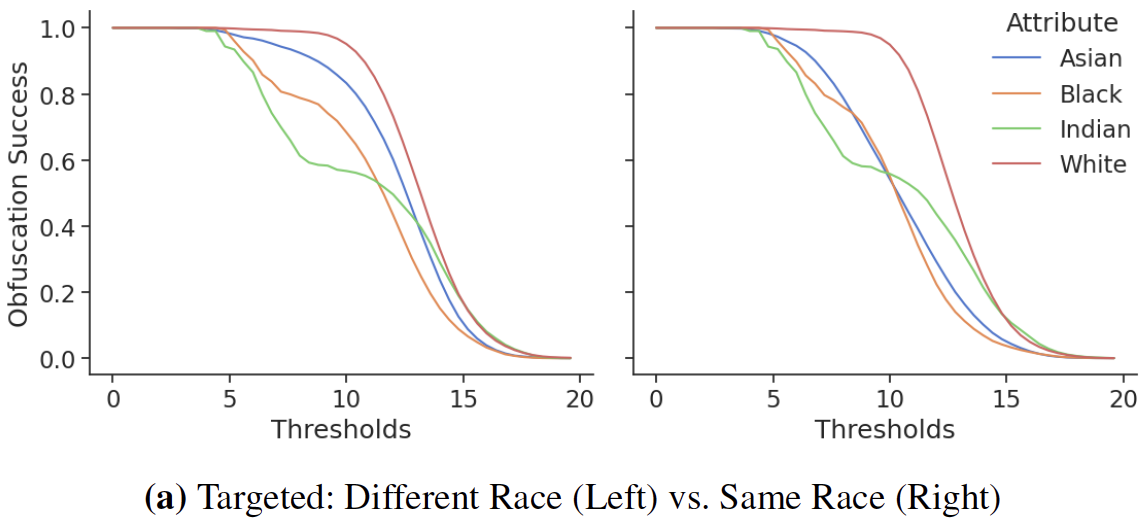

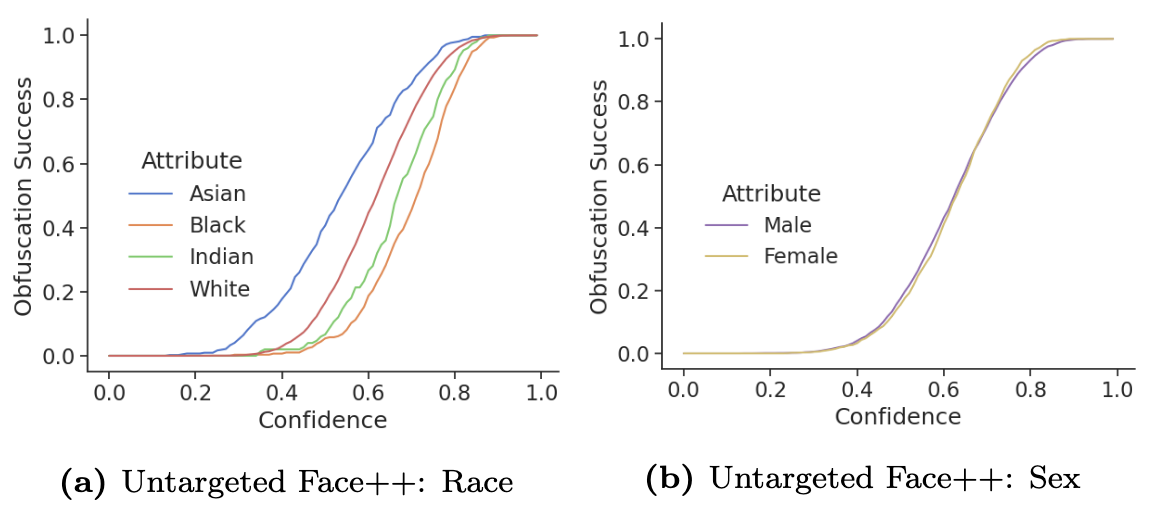

This disparity also exists in the adversarial strength of the attacks. Consider whitebox attacks on subsets of demographics: the performance discrepancies between groups are vast.

For blackbox attacks, the disparity in average obfuscation success rate on the online Face++ API can reach up to 20 percentage points.
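To make the "percentage points" measure concrete, here is a minimal sketch of how a gap in obfuscation success rate between groups could be computed. The function names, the group labels "A" and "B", and the outcome data are hypothetical and made up for illustration; they are not results from the paper.

```python
def success_rates(outcomes):
    """Map (group, succeeded) pairs to a {group: success rate in percent} dict."""
    totals, wins = {}, {}
    for group, succeeded in outcomes:
        totals[group] = totals.get(group, 0) + 1
        wins[group] = wins.get(group, 0) + (1 if succeeded else 0)
    return {g: 100.0 * wins[g] / totals[g] for g in totals}


def max_disparity(rates):
    """Largest gap between any two groups, in percentage points."""
    return max(rates.values()) - min(rates.values())


# Toy data (hypothetical): each entry is (demographic group, obfuscation succeeded?).
outcomes = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]
rates = success_rates(outcomes)
gap = max_disparity(rates)
print(rates, gap)
```

The gap is a difference of two rates, so it is reported in percentage points rather than as a relative percentage.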

Root Cause Investigation

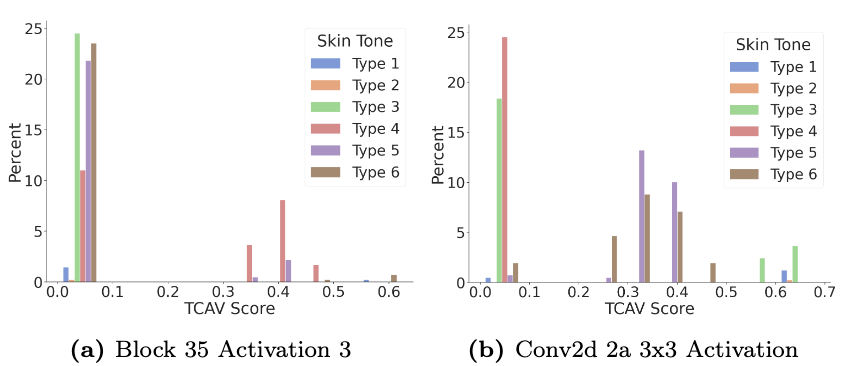

We used TCAV [4] to investigate whether intermediate layers of the network learn to distinguish demographic attributes. After retrofitting the TCAV framework to metric embedding networks, we used skin tones as the demographic concepts and annotated images with the Fitzpatrick scale. The results demonstrate high utilization of skin tone concepts by two early layers in the network. Darker skin tones are learned in a different layer (Conv2d 2a) from lighter skin tones (Block 35), suggesting that metric embedding networks differentiate between skin colors in the early layers.
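As a rough illustration of what a TCAV score measures, the sketch below follows the general definition from Kim et al.: the fraction of inputs whose output increases when the layer activations move in the direction of a concept activation vector (CAV). It assumes the per-input gradients at the chosen layer and the CAV have already been computed. The function name and toy values are hypothetical, and the adaptation to metric embedding networks used in the paper is not reproduced here.

```python
def tcav_score(gradients, cav):
    """Fraction of inputs whose directional derivative along `cav` is positive.

    gradients: one gradient vector per input, taken with respect to the
               activations of the layer under study (assumed precomputed).
    cav:       concept activation vector for that same layer.
    """
    positive = 0
    for grad in gradients:
        directional_derivative = sum(g * c for g, c in zip(grad, cav))
        if directional_derivative > 0:
            positive += 1
    return positive / len(gradients)


# Toy 2-D values (hypothetical), standing in for real layer gradients.
cav = (1.0, 0.0)
gradients = [(0.5, 0.2), (0.1, -0.3), (-0.4, 0.9), (0.2, 0.0)]
score = tcav_score(gradients, cav)
print(score)
```

A score near 1 means the concept direction consistently increases the output for the inputs tested, while a score near 0 means it consistently decreases it.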

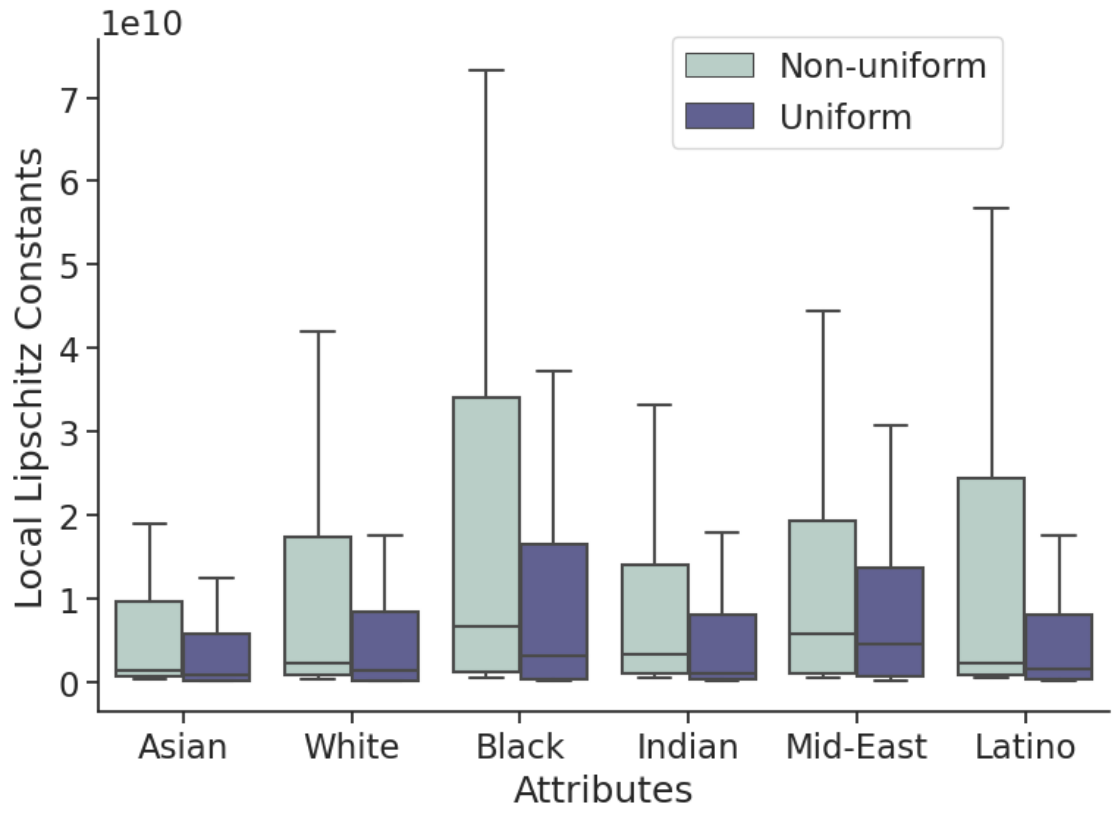

By examining estimates of the local Lipschitz constants, we investigate the stability of metric embedding networks in relation to the demographic distribution of their training sets. A classifier’s margin scales inversely with the Lipschitz constant, making classifiers with high local Lipschitz constants less stable and easier to attack [5, 6]. We use the RecurJac [7] and Fast-Lin [8] bound algorithms to upper-bound local Lipschitz constants within small neural networks trained on datasets with uniform and non-uniform distributions of demographic groups.

Further, identities corresponding to minority demographic groups have larger upper bounds on local Lipschitz constants than majority identities do. These results suggest that networks generalize worse for demographic groups that are a minority in the training set, and are therefore less robust to perturbation for those demographics.
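The link between margin, Lipschitz constant, and robustness noted above can be sketched with a standard argument. The notation is an assumption introduced here for illustration and does not come from the original: g(x) > 0 is the classification margin at input x, and L is an upper bound on the local Lipschitz constant of g within an L2 ball of radius R around x.

```latex
% Assumed notation: g(x) > 0 is the margin at x; L bounds the local
% Lipschitz constant of g on the L2 ball of radius R centered at x.
\begin{align*}
|g(x+\delta) - g(x)| &\le L\,\|\delta\|_2
  && \text{for } \|\delta\|_2 \le R, \\
g(x+\delta) &\ge g(x) - L\,\|\delta\|_2 > 0
  && \text{whenever } \|\delta\|_2 < \min\!\left(R,\ \tfrac{g(x)}{L}\right).
\end{align*}
```

Under these assumptions, no perturbation smaller than g(x)/L (within the ball) can change the decision, so a larger upper bound on L yields a smaller guaranteed radius. This is a weaker certificate, not proof that an attack exists, but it is consistent with the reduced robustness observed for minority identities.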

Citation

@inproceedings{rosenberg2023fairness,

title={Fairness Properties of Face Recognition and Obfuscation Systems},

author={Rosenberg, Harrison and Tang, Brian and Fawaz, Kassem and Jha, Somesh},

booktitle={32nd USENIX Security Symposium},

year={2023}

}

Relevant Links

https://arxiv.org/abs/2108.02707

References

1. Van der Maaten, L., & Hinton, G. (2008). Visualizing data using t-SNE. Journal of Machine Learning Research, 9(11).

2. Nanda, V., Dooley, S., Singla, S., Feizi, S., & Dickerson, J. P. (2021, March). Fairness through robustness: Investigating robustness disparity in deep learning. In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (pp. 466-477).

3. Carlini, N., & Wagner, D. (2017). Towards evaluating the robustness of neural networks. In 2017 IEEE Symposium on Security and Privacy (SP). IEEE.

4. Kim, B., Wattenberg, M., Gilmer, J., Cai, C., Wexler, J., & Viegas, F. (2018, July). Interpretability beyond feature attribution: Quantitative testing with concept activation vectors (TCAV). In International Conference on Machine Learning (pp. 2668-2677). PMLR.

5. Neyshabur, B., Bhojanapalli, S., McAllester, D., & Srebro, N. (2017). Exploring generalization in deep learning. Advances in Neural Information Processing Systems, 30.

6. Virmaux, A., & Scaman, K. (2018). Lipschitz regularity of deep neural networks: analysis and efficient estimation. Advances in Neural Information Processing Systems, 31.

7. Zhang, H., Zhang, P., & Hsieh, C. J. (2019, July). RecurJac: An efficient recursive algorithm for bounding Jacobian matrix of neural networks and its applications. In Proceedings of the AAAI Conference on Artificial Intelligence (Vol. 33, No. 01, pp. 5757-5764).

8. Weng, L., Zhang, H., Chen, H., Song, Z., Hsieh, C. J., Daniel, L., … & Dhillon, I. (2018, July). Towards fast computation of certified robustness for ReLU networks. In International Conference on Machine Learning (pp. 5276-5285). PMLR.